Meta Leverages AI Agents to Solve the “Tribal Knowledge” Crisis in Engineering

A new report from Meta’s engineering team highlights a transformative shift in software development: using AI agents to map and retrieve “tribal knowledge” within massive, complex codebases. By moving the focus from code generation to system comprehension, Meta claims to have drastically reduced the time engineers spend investigating legacy systems.

From Two Days to 30 Minutes

The primary challenge in large-scale engineering is not just writing code, but understanding the thousands of undocumented decisions, historical fixes, and scattered workarounds that define a mature system. Traditionally, this required engineers to manually parse through chat logs, design docs, and issue trackers—a process that could take days.

According to Meta’s internal testing data:

- Investigation Time: Tasks that previously required two days of research are now completed in approximately 30 minutes.

- Efficiency: The AI system reduced internal tool usage by 40 percent by pre-computing and surfacing relevant context before a developer even begins a query.

- Contextual Mapping: Unlike standard search tools, these AI agents build a structured “internal map” of service relationships, dependencies, and historical changes.
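To make the idea of an “internal map” concrete, here is a minimal Python sketch of a dependency graph that can answer "what is affected if this service changes?" It is purely illustrative: the report does not describe Meta's data model, so `KnowledgeMap`, `ServiceNode`, `impacted_by`, and the service names are all hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class ServiceNode:
    """One entry in a hypothetical knowledge map (not Meta's actual schema)."""
    name: str
    depends_on: set = field(default_factory=set)
    history: list = field(default_factory=list)  # free-text notes on past changes


class KnowledgeMap:
    """Toy map of service relationships, dependencies, and change notes."""

    def __init__(self):
        self.nodes = {}
        self.dependents = defaultdict(set)  # reverse edges: dependency -> users

    def add_service(self, name, depends_on=(), history=()):
        self.nodes[name] = ServiceNode(name, set(depends_on), list(history))
        for dep in depends_on:
            self.dependents[dep].add(name)

    def impacted_by(self, name):
        """Return every service that depends on `name`, directly or transitively."""
        seen = set()
        queue = deque([name])
        while queue:
            current = queue.popleft()
            for parent in self.dependents.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    queue.append(parent)
        seen.discard(name)  # a cycle should not make a service impact itself
        return sorted(seen)


# Hypothetical services, used only to exercise the sketch.
kmap = KnowledgeMap()
kmap.add_service("auth")
kmap.add_service("payments", depends_on=["auth"],
                 history=["retry logic added after a timeout incident"])
kmap.add_service("checkout", depends_on=["payments"])

print(kmap.impacted_by("auth"))        # ['checkout', 'payments']
print(kmap.nodes["payments"].history)  # the "why" behind a past change
```

Storing the reverse edges up front is what separates a map from a search: the risk question becomes a graph traversal rather than a keyword hunt across documents.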

Understanding Over Generation

While tools like GitHub Copilot have focused on generating syntax and boilerplate, Meta’s approach treats AI as a knowledge assistant. Engineers can query the system in plain language to understand why a system behaves a certain way or to assess the risks of a specific dependency.

Meta achieves this by pre-computing context. By continuously processing version control history and internal communications, the agent prepares structured representations of the system ahead of time. Because the relevant context is already assembled when a question arrives, the AI can reason over it directly and make fewer calls to external databases, leading to faster and more accurate developer support.
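The pre-computation pattern can be sketched in a few lines of Python. This is an assumption-laden illustration, not Meta's pipeline: the `Commit` record, `precompute_context`, `explain`, and the sample commits are all hypothetical. The point is only that the expensive pass over history happens once, and each later query is a cheap lookup.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    """Hypothetical, simplified view of one version-control commit."""
    sha: str
    message: str
    files: tuple


def precompute_context(commits):
    """Build a path -> [commit summaries] index once, ahead of any query."""
    index = defaultdict(list)
    for commit in commits:
        for path in commit.files:
            index[path].append(f"{commit.sha}: {commit.message}")
    return dict(index)


def explain(index, path):
    """Answer from the pre-computed index without rescanning history."""
    return index.get(path, [])


# Made-up history, used only to exercise the sketch.
history = [
    Commit("a1b2c3", "Add retry to payment client after timeout incident",
           ("payments/client.py",)),
    Commit("d4e5f6", "Cap retries at 3 to avoid duplicate charges",
           ("payments/client.py", "payments/config.py")),
]

index = precompute_context(history)
for line in explain(index, "payments/client.py"):
    print(line)
# a1b2c3: Add retry to payment client after timeout incident
# d4e5f6: Cap retries at 3 to avoid duplicate charges
```

A real system would also need to ingest chat logs and design docs and keep the index fresh as new commits land; this sketch ignores both.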

The Challenges of Data Quality

Despite the measurable gains, the report acknowledges significant hurdles for the broader industry:

- The Garbage-In, Garbage-Out Risk: AI agents are only as reliable as the documentation they ingest. Incomplete or contradictory internal logs can lead to misinterpreted context.

- Scale vs. Accessibility: While Meta has the resources to build proprietary knowledge maps, smaller organizations may struggle to implement similar agentic workflows.

- Trust and Verification: Meta emphasizes that these agents are not replacements for human expertise; engineers must still verify AI-generated summaries to ensure critical details aren’t missed.

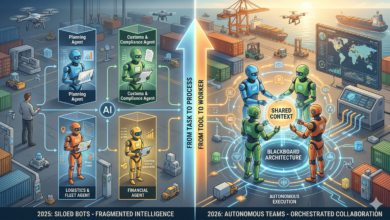

The Shift Toward “Stateless” Engineering

By automating the retrieval of tribal knowledge, Meta is effectively attempting to make engineering “stateless.” Usually, when a senior developer leaves a company, they take a “state” of knowledge with them that is difficult to replace. If AI agents can successfully index the reasoning behind code rather than just the code itself, they mitigate the risk of “knowledge silos.”

However, there is a subtle danger: if teams rely too heavily on AI to explain their systems, the incentive to write clear, human-readable documentation may vanish entirely. We could enter a cycle where code is written by AI, documented by AI, and understood only by AI, leaving the human engineer as a mere “reviewer” of a system they no longer truly comprehend. Meta’s 40 percent reduction in tool usage is a win for productivity, but the long-term test will be whether this improves code quality or simply increases the speed at which we accumulate technical debt.

How do you see the role of “tribal knowledge” changing in your own workflow—should AI focus on documenting the past or just predicting the next line of code?